Sogeti Executive Summit 2015

Theme: Computer Says No

In October 2015, an international group of over a hundred CIOs, senior executives and world-renowned speakers gathered at one of the premier hotels in Stockholm to discuss the latest in Machine Intelligence. The summit covered all dimensions of this hot topic, with a line-up of economists, a philosopher, professors, CIOs, startups and analysts. Interactive debates and discussions brought out the contradictions and challenges in a lively manner. This page gives an overview of what was discussed and describes the insights that were shared among the attendees. It provides a starting point for further exploration.

Opening: Hans van Waayenburg, CEO Sogeti and Michiel Boreel, CTO Sogeti

Hans van Waayenburg welcomed the attendees and opened the summit: “This is the tenth time that we have organized this event and we’re honored and proud that we have over one hundred people from our important clients here today in Stockholm.” By way of introduction to the program, Mr. van Waayenburg highlighted perhaps the most important question around the topic: “AI is the next big thing and that’s what we’ll talk about for two days. But is this just an economic opportunity or a threat to humanity?”

Michiel Boreel, host of the event, continued: “Let’s talk about AI. Why are we so fascinated by this topic? If you’ve been to our previous summits, you know that a lot of what we do with SogetiLabs is SMACT: Social, Mobile, Analytics, Cloud and ‘Things’. Last year we discussed which disruptions are coming to your industry. But if you combine all these technologies, you get to AI – Artificial Intelligence. And it’s interesting that there are so many paradoxes around this topic. This already started in the fifties when John McCarthy organized the first conference around the topic in Palo Alto, about a computer that could think like a human being. At the same time, across town, was Douglas Engelbart. He also looked at AI, but said it’s not about computers that think like us, but about computers that augment us. Like a prosthesis for our brains. Ever since then, these two views have coexisted: Artificial or Augmented intelligence.

The second paradox is created by Hollywood. In the last year alone we’ve seen seven movies about Artificial Intelligence and for some reason, it always ends badly for the humans. We fall in love, the AI escapes, and even the Terminator is back. Robots are supposed to help us, but in the movies we always seem to fight them.

Or there’s the controversy about jobs: will AI create jobs or take our jobs? This too started in the fifties. According to urban legend, union leader Walter Reuther was invited by Henry Ford II to tour the new factory full of robots. Henry Ford quipped: ‘Your unions are over! Do you think these robots will pay union fees?’ To which Walter answered: ‘Do you think they will buy cars?’”

Day 1 - Part One: The Slow Explosion of Artificial Intelligence

AI seems to be exploding; attention is enormous, among venture capitalists but also in major blockbuster movies. Yet, at the same time, we’ve been working at it since the 1950s. There have been many periods of major interest followed by a fallback. Will it be different this time?

Setting the Stage - Menno van Doorn

Director SogetiLabs - Sogeti Netherlands

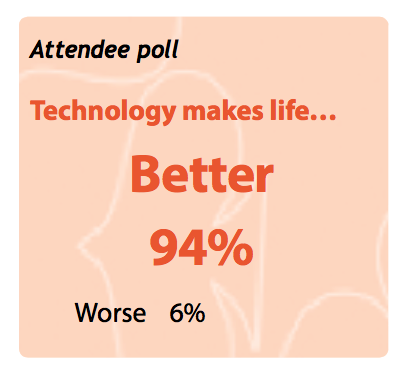

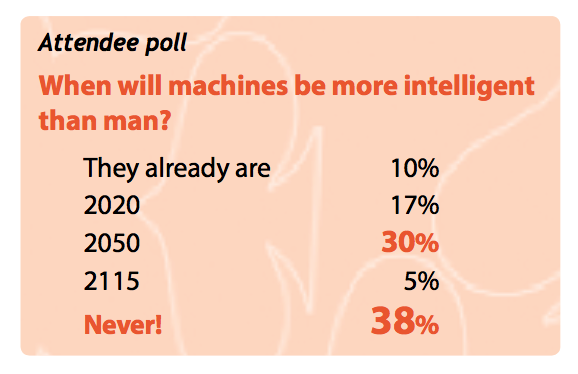

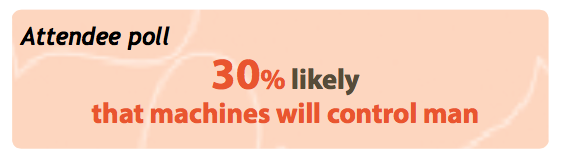

Something is definitely happening today, but we still have to figure some things out. Progress is made when three things combine: technical possibility, economic feasibility and social desirability. And sometimes when man and machine work together it can still become a nightmare. Imagine standing in front of a desk, and behind the desk is Carol Beer, from Little Britain. You’ve ended up in a typical ‘Computer Says No’ scenario, where seemingly simple requests are all denied because the computer (supposedly) says no. I think it’s in these types of situations that we dream of an age of truly intelligent decision machines. Can this be achieved? Already computers are answering the phones, pretending to be human. Even when you ask to speak to a supervisor, you still get a computer. Will machines eventually become more intelligent than man?

This has been the theme of many science fiction books and movies. For example ‘Dial F for Frankenstein’, which is about a phone system that, when it grows up, starts to take control of society. Or ‘Her’, in which the main character falls in love with his automated assistant.

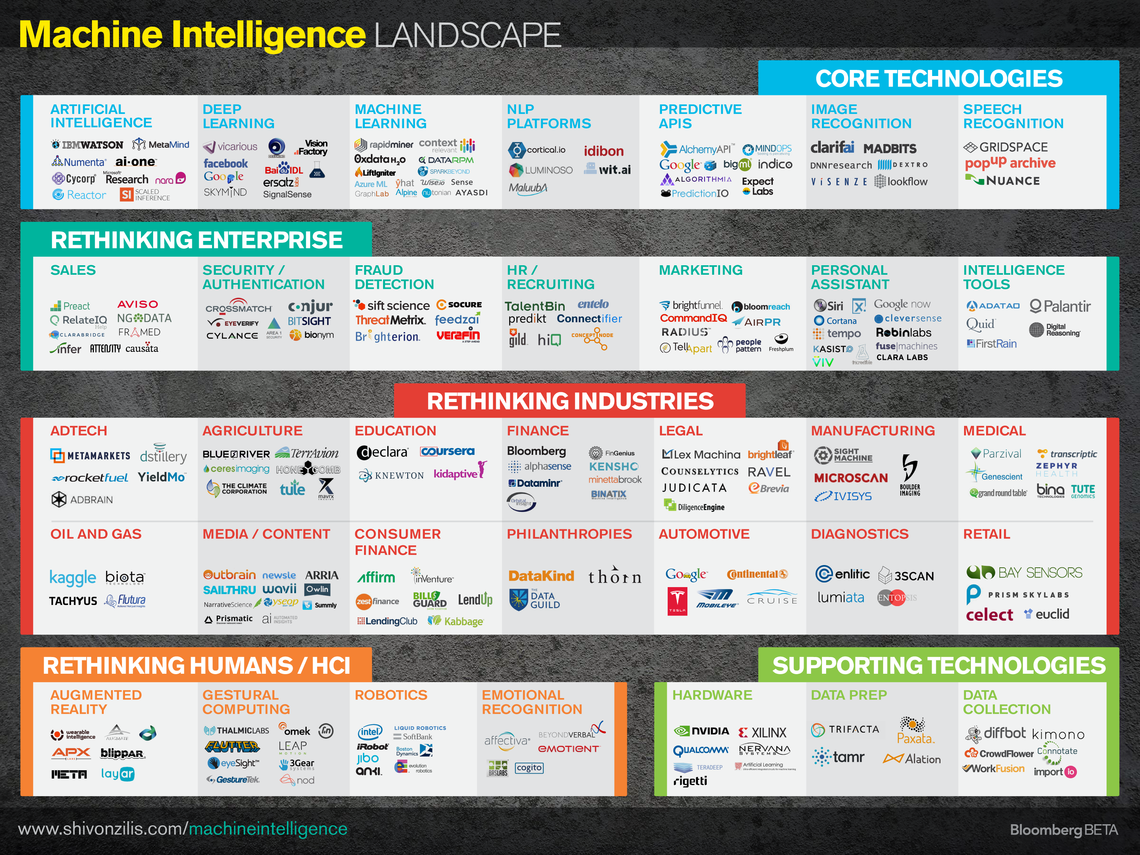

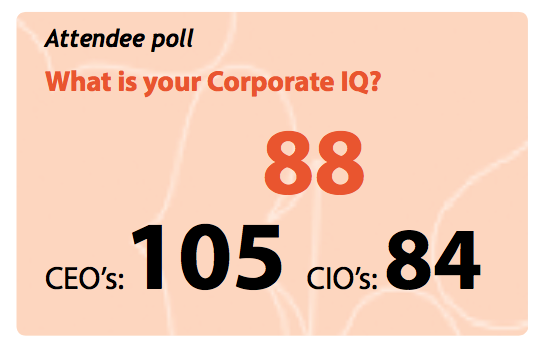

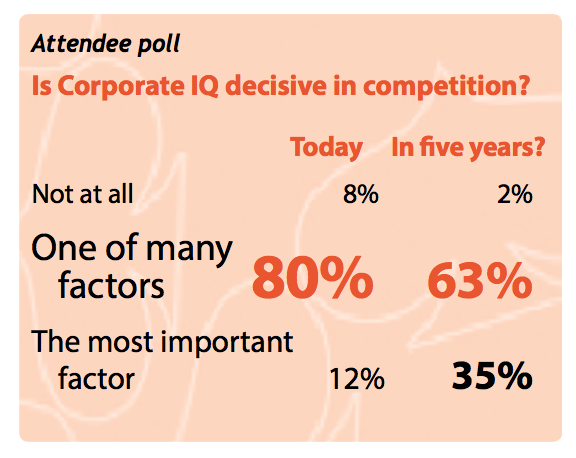

So what are we talking about? There are many things under the umbrella of Machine Intelligence: Weak AI that is narrow and sometimes shallow, strong AI that aims to be person-like, Applied AI that simply tries to solve problems. Will this be good for your company? Will you be able to increase your corporate IQ? Will it be good for you as a citizen, or for society as a whole?

We’ve definitely entered a new era of possibilities. There is RoboHow: a robot you could, for example, ask to flip a pancake. It will go to Wikipedia and look up ‘pancake’ and ‘flipping’ and learn to flip a pancake that way. Machines are starting to learn from other machines, and there are surprises every day. Soon you will be able to meet Spencer, a robot, at Schiphol airport, guiding people and answering questions. What will happen if Spencer is walking around? In Osaka a similar robot was teased and kicked by children, so it learned some defensive tactics, such as staying closer to adults or trying to get away. Social consequences.

We are really celebrating something. Kevin Kelly has a simple statement: the next 10,000 startups will be ‘take x and add artificial intelligence’. Then again, in the 60 years of AI there have been summers of optimism and winters in which we said ‘it will never happen’. Are we now in for a hot summer? There are many things that are technically possible, and some of them are even financially feasible. But only when it’s socially acceptable will things really happen.

(Source: www.shivonzilis.com/machineintelligence )

Your Cognitive Future – Steve Mills

Group Executive IBM

Technology naturally builds on everything that was created before. Innovations of today are built on top of innovations of the past. It leads to exponential growth and innovation. We keep making things smaller, more mobile. Our interest and curiosity never end, and with more data and compute power, we can understand the world around us better. AI learning systems fit into this desire. And the insights that we’re trying to find are probabilistic, not mathematical: it’s not like a spreadsheet. We correlate, relate and infer from an ever-growing amount of data, driven by statistical relations, derived using various AI techniques that have been around for a long time.

Microchips (now with over 5 billion transistors on a chip) are easy to string together to build a powerful computer. They give you the option to mimic the way the human brain works. Mimic, not copy, because we humans have over 100 billion neurons with trillions of synapses that use only 20 watts of power. Way beyond any computer today.

The world of cognitive will not replace the compute world as we know it, but wash over it. It allows you to attack problems that were difficult or impossible to program against. Programming is rules. Instead you let the machine discover, and if it knows the entities, attributes and relationships, it knows all. Not ‘know’ literally, but it could give the answer to any question you might have. It’s able to associate anything new. It finds its way to the best probable answer for the next thing you introduce, including the evidence and statistical background around the answer. That’s what these systems do. And it used to be prohibitively expensive to do, but no more.

IBM’s Watson started out playing Jeopardy!. It was an outgrowth of many discussions around technology that we had worked on since the seventies. A team came together around an idea, one evening in a bar, and Jeopardy! was on TV. They agreed to work on something fun. We put all the pieces together, trained and tuned, and won the final. Up until sixty days before that final match, we were still losing. But fill it with enough data, definitions and attributes and you get a hockey-stick effect: the machine begins to demonstrate what appears to be intelligence. On ten racks of servers, using 80,000 watts of electricity. It doesn’t compare to the human brain.

It’s a system of individual systems. In it are dozens and dozens of different systems: language parsing, pictures, movies. We’ve been adding all kinds of ways to see, to reason in language, related to culture and the problem set. There are all kinds of reasoning engines, and we pick off the ones that are most relevant. And we now use Watson in many areas. For example in oncology, to do diagnoses based on all current and historical research, sometimes even spotting treatment options that previously went undiscovered.

What’s the downside of this development? A disconnect around knowledge. A lack of effective employment related to knowledge. What would a student need to know to later have a good job? There is a disconnect today: companies are getting more efficient, but employment stays behind. Capital dollars are used to automate jobs that are no longer needed. AI is beginning to eat into the white-collar positions. It puts lots of pressure on governments in the world.

Still, I’m optimistic: I’m looking forward to technology that will help us address the large challenges in environment, medicine and quality of life. It’s all about getting value from data, and still 80% of the time in an AI project is spent on data curation. I foresee a great, steady demand for data scientists. There won’t be a company in the world that will not have some kind of cognitive project in 2020.

Unleashing the Next Wave of Innovation - James McQuivey

Principal Analyst - Forrester Research

I would like to talk about the inevitability of autonomous machines. People are motivated by fear of change, which usually stands in the way of adoption, but other mechanisms are at play that counter this fear completely. What we end up with is Hyper-adoption. Nothing stands in the way of autonomous machines being embraced by mankind.

Isn’t adoption hard? Yes! That’s the point of my whole research, addressing the question ‘How do you get people to do something new?’ Because clearly it’s hard. Yet still people stand up and say ‘change will come’. If autonomous machines are so inevitable, why are we so afraid? Popular entertainment shows us that we are worried or even terrified that they may change our environment (for example in the movie ‘Ex Machina’). The question is, will we overcome our fear?

Our brain is wired to be careful, fearful of change. When something new comes along we automatically look at it as a potential threat or as a way to incur a loss: Spend $1000 on something that I’m not so sure about? I don’t want that. Loss aversion is real. But something is countering it: Digital disruption. Digital disruption reduces loss. In old business, before digital, there was a cost in every innovation. In old business, only a few people could bring just a few disruptive ideas to market. “Bigger TVs are now possible!” You’d wait, prices would go down and then you’d buy.

Now the economics are inverted: many more people bring much more innovation. Kickstarter and Indiegogo have countless examples. We are continuously offered new things that are free or nearly free. Everywhere you go: free apps, free experiences. Our kids’ world is filled with zero-cost offers, surrounded by low-cost offers. Many, many changes without any cost or fear. Kids don’t even process the fear: no potential buyer’s remorse, because they are not a buyer. It allows us to begin using intelligent machines without even knowing it. You’ve not even perceived it, because there is no significant cost to doing it.

Welcome to hyper-adoption: a world that is full of self-driving cars, autonomous robots, HoloLenses, … Autonomous machines kick in when hyper-adoption goes system-wide. And I don’t even need a real robot, I can just start with some sensors, some data and build some new solutions on that: connecting diabetes patches to insulin pumps for example. We can see how this is likely to evolve. We see which platforms are well positioned to become powerful. For example, let’s start with Amazon Alexa. When I order something through Alexa, it connects to another robot in the warehouse. And we’re close to a next step, where a robot drone could deliver to your home. The thing is: there is no risk and it has the potential to make me happy. This is how robots will take over the world, making us happier. We ask them to solve our problems, they do it and we’re happy.

Economics make it easier and easier to keep adding sensors. Now we pour all the data into the cloud, and with a simple credit card we can access and analyze the data on all the sensors.

At no point do we trigger the fear in people: people will love it! Yes, there are some challenges in government regulation, but the main questions we will ask are ‘Where can I get more sensor data?’, ‘Where do I have permission to access data?’ and, most importantly, ‘What kind of intelligence can I create with that?’

Day 1 – Part Two: Our Race against the Machine

Is it really a race? And if so, what is the status: who will win? Man or Machine?

Persuasive Technologies – Maurits Kaptein

Assistant Professor Robotics and Machine Learning - Radboud University

I work on artificial systems that can sell things. I research how machine learning and AI can be used to increase the effectiveness of ecommerce platforms. AI is here already. We see it in search, finding the best results. Amazon has machine learning to recommend products. I’m interested in the psychology of things. How is this evolving?

Ask yourself: How do I make eCommerce more effective? When you want to make people do (or buy) something, you have to ask why people do things in general. It’s actually simple: they have to be motivated, and have the opportunity and the ability to do it. It’s a simple but handy check that helps predict future behavior. How likely is it that I will throw a brick through a window? Ability: yes. Motivation: hard to see. Opportunity: no. Conclusion: not very likely.

Of these three, I’m particularly interested in motivation. Or in other words: how do I motivate or influence people? There is actually good research on persuasion methods. People prove to be sensitive to these triggers:

Social proof: people do what others do. Regardless of what you ask, if others did it, so will you. Consequently, online products are presented as best sellers, with lots of likes. Experiments show that it’s easy to get people to give a blatantly wrong answer to a question, as long as others did so first.

Authority: people follow authorities. In the famous Milgram experiment, people readily applied electrical shocks to test subjects when instructed to do so by an ‘authority’ in a lab coat.

Scarcity: when something is running out, people will want to buy it or pay more for it.

What we can then do is follow people around online and, based on earlier behavior, build persuasion profiles: detailed information about how susceptible someone is to each different type of persuasion (social proof, authority or scarcity).

You can then use this information to sell. For example, when you book a hotel the site can show scarcity, but it can also use the fact that the Lonely Planet (an authority) recommends it. The site chooses which arguments to use to persuade you.
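As an illustration of what a persuasion profile could look like in code, here is a minimal sketch. It is not the speaker’s actual system: the class name `PersuasionProfile`, the strategy labels and the smoothed success rate are all assumptions made for this example. It simply counts how often each type of argument was shown to a visitor and how often it was followed by a purchase, and then picks the argument with the highest estimated success rate.

```python
from collections import defaultdict

# Hypothetical labels for the three persuasion types named in the talk.
STRATEGIES = ("social_proof", "authority", "scarcity")


class PersuasionProfile:
    """Tracks, for one visitor, how often each persuasion strategy worked."""

    def __init__(self):
        self.shown = defaultdict(int)      # times a strategy was displayed
        self.converted = defaultdict(int)  # times it was followed by a purchase

    def record(self, strategy, success):
        """Store the outcome of showing one strategy to this visitor."""
        self.shown[strategy] += 1
        if success:
            self.converted[strategy] += 1

    def susceptibility(self, strategy):
        """Smoothed success rate; 0.5 when nothing has been observed yet."""
        return (self.converted[strategy] + 1) / (self.shown[strategy] + 2)

    def best_strategy(self):
        """Return the strategy with the highest estimated success rate."""
        return max(STRATEGIES, key=self.susceptibility)


profile = PersuasionProfile()
profile.record("scarcity", True)    # 'only 2 rooms left' led to a booking
profile.record("scarcity", True)
profile.record("authority", False)  # a guidebook recommendation was ignored
print(profile.best_strategy())      # prints "scarcity"
```

A real system would also need to keep exploring the other strategies now and then, otherwise an early lucky outcome could lock in one type of argument forever.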

This is just one example of lots of work in this space. E.g. Facebook is actually mining their feed and what people write, relating it to personality. Now your personality determines which recommendations you get and what advertisements you see. Using the knowledge to shape ‘your’ Internet. We’re not building robots that are walking around, but building technology that is used to influence people.

The web is the greatest telescope for human behavior that we’ve ever built. The insights are fun and valuable, but could they also be abused? Perhaps. On the other hand there have been good and bad sales people around forever, and even they didn’t succeed in selling everything to everybody.

Socially Intelligent Robots – Vanessa Evers

Professor Robotics and Machine Learning - Twente University

I work with robots, computers and platforms. Progress is everywhere. There is an exoskeleton that helps someone walk by thinking about walking. There is a shopping assistant robot, for a mere 2,000 euros, while traditionally these would cost at least 100,000 euros. Now suddenly a lot of people can start building services, and there is no science degree necessary. Or even simpler: a tele-presence platform, a machine that represents someone and that can move around with remote control. These are hugely underrated, as the business case is much easier to make: imagine doctors visiting patients in different hospitals. There are many applications for it.

What do they all have in common? They are being used in social environments. And the challenge becomes to build robots with social intelligence. If you want to build a system that works with people, you have to understand how people work. If we want technology to fit seamlessly into our life, we have to know and follow the social norms. So that’s what we did: we examined how people move around and manage their personal space. We spent hours and hours watching people and patterns at Schiphol Airport and then ran contextual analysis studies. We’re also analyzing speech to discover patterns that computers can detect, for example to determine the most dominant speaker. When we can detect, we can build a robot.

So what kind of things can we find? We can recognize emotions from facial analysis, even outside, and we can see on video which speed-dating couples would indeed ‘click’ (it turns out the variation in distance between them is an important indicator). We can use social intelligence and put it into a machine. For example a robot that helps paraplegic people eat. It helps people participate at the dinner table without needing to ask for help. We built a simple module that listens to the table conversation, and when there is a pause, it’s a good moment to offer another bite of food. Simple but very powerful.

It’s fun to examine how people interact with robots. Ask a team to solve a problem, and the one person present through tele-presence is automatically given more authority. So the goal is not to just translate human behavior to robots; we need new research to find out how robots need to interact. Now finally machine learning can do the low level navigation, so we can focus on higher level, the social dimension.

What we’re learning is that for example care-robots have so much agency that people are starting to see ‘internal motivation’ in them. We see meaning easily. “That one is trying to help me”, “It’s trying to pick something up”. When you have any robot surface, you have to think about what the robot communicates. And it’s a continuous interplay, of how robots and people react.

We noticed how quickly people adjust to a robot instead of a person. When robots are roaming freely, people play: they take selfies or try to frustrate or challenge the robots. Long-term studies on care-robots show that people get annoyed with robots all the time, but that patients do stick better to their health program (for example an exercise or medicine regime).

So are we close to the movie-type humanoid robots? Well, in natural language processing, cloud processing and energy storage we are making great leaps forward. It could go very quickly. On the other hand: it’s really hard to build a robot. And realizing a robot that looks exactly like us is perhaps a bit arrogant… why like us? If we just focus on a specific goal, we can probably get there much quicker!

Machine Learning is everywhere - John Bronskill

Architect Machine Learning & Perception - Microsoft Research Cambridge

Remember Paul the octopus? He predicted 85% of the German World Cup soccer matches correctly. Alas, a few years ago Paul died, and perhaps it’s time for a replacement. A better way to predict. That’s what machine learning is: using algorithms that learn from data. And machine learning is everywhere.

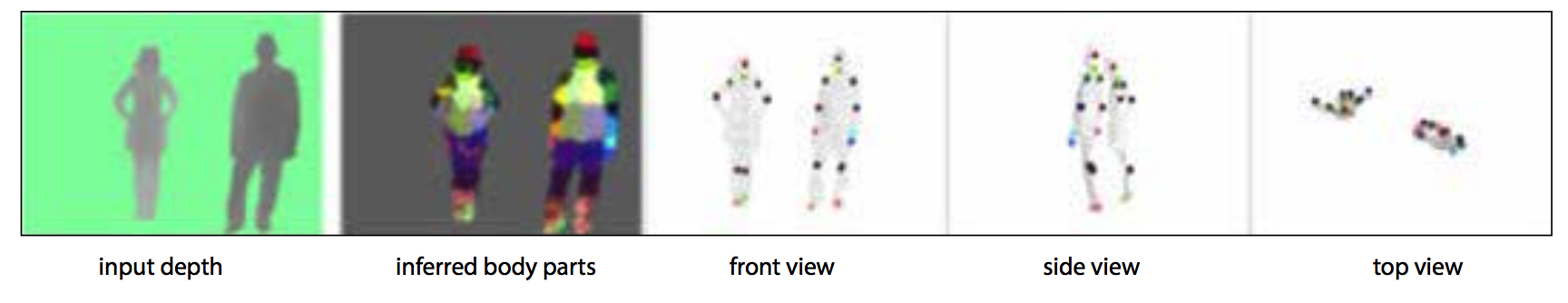

Figure 1 - Machine Intelligence analyzing Kinect Images

Machine learning is in Amazon’s online recommendations, in credit card fraud detection and in preventive maintenance of elevators. It can predict if something on a medical scan is cancerous or not. We can use it to predict the sale price of a home, learning from previous sales: you feed it the size, number of rooms, distance to school etc. and through training, it builds a pricing model. And machine learning is of course in online search, matching the search terms to the best of millions of documents. Even after the results show, more learning takes place: depending on what you click, and whether you continue searching or not, the results get continuously improved.
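To make the home price example concrete, here is a minimal sketch of such a pricing model in plain Python. The talk does not say which algorithm was meant; this sketch assumes ordinary least-squares linear regression, and the function names `fit_linear_model` and `predict` as well as the sales figures are invented for illustration.

```python
def fit_linear_model(rows, prices):
    """Least-squares fit: solve the normal equations (X^T X) w = X^T y."""
    X = [[1.0] + list(r) for r in rows]  # prepend a 1 for the base price
    n = len(X[0])
    # Augmented matrix [X^T X | X^T y]
    A = [[sum(x[i] * x[j] for x in X) for j in range(n)]
         + [sum(x[i] * y for x, y in zip(X, prices))]
         for i in range(n)]
    # Gauss-Jordan elimination with partial pivoting
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(A[r][col]))
        A[col], A[pivot] = A[pivot], A[col]
        for r in range(n):
            if r != col:
                factor = A[r][col] / A[col][col]
                A[r] = [a - factor * b for a, b in zip(A[r], A[col])]
    return [A[i][n] / A[i][i] for i in range(n)]


def predict(weights, row):
    """Estimated price = base price + weighted sum of the features."""
    return weights[0] + sum(w * v for w, v in zip(weights[1:], row))


# Invented previous sales: (size in m2, number of rooms, km to school)
past_sales = [(80, 3, 1.0), (120, 4, 2.5), (60, 2, 0.5),
              (150, 5, 4.0), (95, 3, 3.0), (110, 5, 1.5)]
past_prices = [235000, 317500, 187500, 380000, 255000, 312500]

weights = fit_linear_model(past_sales, past_prices)
print(round(predict(weights, (100, 4, 2.0))))  # estimate for an unseen home
```

The ‘training’ here is a single calculation that finds the weights that best explain the previous sales. Real pricing models use many more features and sales, and usually a library rather than hand-written algebra.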

The learning algorithms themselves are not very novel: logistic regression, random forests and neural networks can be very effective, but they need a large number of training examples. Getting labeled data can be labor-intensive, and a trained model doesn’t generalize well to new domains. That’s when you can put the human in the loop and create a hybrid system. An AI with supervision by humans, looking at how humans respond and learning from it. For example, when we built out the capabilities of the Xbox Kinect sensor to recognize body parts, we didn’t start from scratch. We built a model of what humans look like, and used that as a starting point for training. This led to a very ‘smart’ system. Other cases are software development tools that serve exactly the right code examples at the right time, or an email inbox that helps sort and prioritize email.

Why is it now ‘suddenly’ so ubiquitous? There are a bunch of reasons: there is a lot of data being generated. Cheaper storage, connected customers and cloud computing make for a powerful combination. And AI is moving to the cloud, with Google Prediction API, Amazon Machine Learning, IBM Watson Analytics and Microsoft Machine Learning. You can expect machine learning to start popping up in many more places, since it’s becoming extremely easy to integrate. So if you want to summarize where we are today, it’s very simple: Machines can learn.

The Internet is not the Answer - Andrew Keen

Entrepreneur and Author

What happened to the ambitions that we had? The great dreams of the Internet? Where did we end up? We always think technology is good and beautiful, but perhaps not… Today the power is in the hands of a few, who employ no people. What is the role of technology in society?

It’s actually a dumb question to think about it as these two things. It assumes that technology is a first mover, that through technology you get society. This is overly simplistic: technology is society, it came out of society. A lot of my work focuses on that. It’s not separate; it’s not something you impose on society. Technology does not exist outside of values. To understand it, you need to understand Silicon Valley. Technology always comes from specific social values, and one of the great problems with utopians is that they think the Internet was delivered to us like a reward. For example, they frame the network neutrality discussion as ‘taking neutrality away from us’, as if it’s a right. While in reality, the Internet has a specific, military history that goes back to the end of World War II.

Ignorance, Inequality, Unemployment, Human Rights, Liberty. The architects of the early Internet all believed that the Internet would solve all these problems. Is the operating system of our networked society ‘the Internet’? The old operating system, of the previous age, was a top-down system. Capitalist, where the firm was very strong, and everybody worked for a firm. It was controlled from the top, through media.

This would be the new age, where everything changes. As profound a change as the industrial revolution, with radical changes in everything. At first people believed that the Internet would solve everything. They thought that technology would solve it by itself, that the architecture of a democratic structure of nodes would take away the power of elites. Everybody would become an entrepreneur, blogger, videographer or citizen journalist. The hope was to make society a better place, solve ignorance, inequality, unemployment etc.

I’m a pessimist; it failed. What we see is the iron law of digital history: the nature of the network is that it enables new, larger monopolies. Worth almost a trillion dollars in mapping, search, video, mobile, … The same is true in every other sector: Amazon has no competition, certainly not in the west. While it’s not as simple as that the first mover wins, when a company wins its market, it becomes a monopolist.

The second problem is about unemployment. It’s an existential threat. We are defined as a working species, defined by what we do. The only way to be cheerful without work is drugs. This technology is doing away with jobs. The famous Oxford University study states that 44% of jobs will disappear, with no evidence that new jobs will come.

And there are other problems. This so-called ‘Free economy’ is in fact costing us our liberty, our privacy. Everything we do is being watched, mined, traded or sold. And the worst is yet to come; with self-driving cars and automated homes we will complete the surveillance society.

And there is ignorance: Internet was supposed to make us wiser, but instead we have the decimation of classic media and music industry. We’re depending on platforms without editors. We are less enlightened, more ignorant as we have created an echo chamber where our views are confirmed. The ultimate consequence is the selfie: technology is fragmenting us, alienating us. We’re glorifying ourselves. We can look at great historical paintings, but instead we take a selfie… instead of observing, we glorify our own existence. We put ourselves in the middle of the universe. We’ve gone back to a pre-Copernican society.

So what do we do? I hope that our fear will create a new social contract. At the moment it’s all about rights: individual rights, as in Taylorism 2.0, where working for Amazon is so miserable that people want to commit suicide. We need to rethink the network: regulation is all-important. We’ve seen this before with early capitalism. We were saved from that by the government, which has a critical role to play in solving these issues. For now, we’re not getting that from America, but we are getting it from Europe. We should respect and applaud the German government’s ‘right to be forgotten’, the European approach to privacy. But most importantly, we need self-regulation: this is a major moment for our species. We are being replaced by robots. Machine intelligence challenges who we are, the essence of what it means to be human. We need to empower ourselves, distinguish what it means to be human and do it really well. Otherwise we’ll end up in a ‘Brave New World’.

Day 2 – Part Three: From Machine Intelligence to Corporate IQ

What is your corporate IQ? And can you improve it? Big data + natural language processing + machine vision + machine learning + cloud + natural language generation = Informed Intelligent decisions.

Machine Learning Monitoring - Goran Sandahl

Co-founder and CTO - Unomaly

We were founded in 2012, with the purpose to universally uncover incidents through smart algorithms. What we’re seeing is that most failures that we have are one of a kind, very rare or even unique. So instead, what we learn is the normal behavior of systems and software. Every piece of software is creating data when it’s running and it’s amazing how little of that data we use today. IDC said that only 1% is being used. What we want to do is use 100% of the data, so we can explore and know what’s happening in our infrastructures.

How is this business relevant? The short-term answer: when there’s a problem, we fight hard to find the root cause. What changed, what is different? 20% of time in IT is spent diving into the black box of IT. For more than 40% of incidents the cause is unknown. And there is another kind of invisibility: 69% of security incidents were discovered by third parties (!). More tactically and strategically it’s about risk management: knowing what’s coming at you and what the impact could be.

What we’re doing is tackling two hard problems. A car already has about 24 computers in it. Every system has different versions, generating different types of data. It’s hard to know what data is useful, which log to trace. Instead, we need to dynamically understand what data to choose.

Second: incidents are unique. They are unknown and dynamic. That’s where machine learning comes in. We take that streaming data of applications, infrastructure, mainframes, newborn systems, etc. Machine learning allows us to disregard any structure or typical ‘data quality’. It allows us to learn the structure as we go. We let it summarize what it learns. E.g. a Windows machine: what does it do on a daily, weekly or monthly basis? That profile is only 2 MB, because it’s so repetitive. It creates the ‘normal’, and isolated anomalies immediately stand out. It allows us to zoom into the root cause of what happened. And it works. We run at places like nuclear power plants and the EU government. It works for both errors and intentional disruptions. And as Anton Chuvakin from Gartner said: “Even the most advanced hacker will leave traces in the log data.”
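The idea of learning the ‘normal’ and letting anomalies stand out can be sketched in a few lines. This is not Unomaly’s algorithm, which the talk does not describe in detail; it is a deliberately simple stand-in that assumes log lines become comparable once their variable parts (numbers, hex ids) are masked. The function names and log lines are invented for the example.

```python
import re


def template(line):
    """Mask variable parts so that repetitive lines collapse into one key."""
    line = re.sub(r"0x[0-9a-fA-F]+", "<HEX>", line)
    return re.sub(r"\d+", "<NUM>", line)


def learn_normal(lines):
    """The 'normal' profile: the set of line templates seen so far."""
    return {template(line) for line in lines}


def find_anomalies(normal, lines):
    """Return the lines whose template was never seen during learning."""
    return [line for line in lines if template(line) not in normal]


history = [
    "session 1041 opened for user 7",
    "session 1042 opened for user 9",
    "disk check finished in 12 ms",
]
live = [
    "session 2001 opened for user 3",
    "kernel panic at 0x7ffe12",
]

normal = learn_normal(history)
print(find_anomalies(normal, live))  # prints ['kernel panic at 0x7ffe12']
```

Because the history is so repetitive, three log lines collapse into two templates, which hints at why a profile of a whole machine can stay small.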

Machine Intelligence – Greg Ericson

CIO - Essilor of America & CEO - Optical Lab Software Solutions

Essilor is the world’s largest prescription lens-crafter with an international presence. We’re seeing interesting developments in machine intelligence in our business, and in several parts of the company we’re starting to do things differently, enabled by state-of-the-art technology.

If I look across the chain, intelligence is many things. It’s sensing things, learning about them, acting, creating, interacting and sharing. There are many inspiring examples in all these areas. Contact lenses that sense your blood sugar, which can be life-saving for diabetics. Robots, like Baxter the learning robot who can follow instructions by training instead of programming. Automated trading computers that act autonomously etc. etc.

Similarly, we’re developing in all these areas too: instead of simple eye charts, we now create machines that use up to 20 measurements to find out how you look and focus, leading to much more precise prescriptions. Our websites self-optimize, our online ads are computer-managed. We use new technology and experiment with 3D printing to allow customers to create new designs interactively. We even offer virtual try-out options where you can see, before you buy, how glasses would look on you and what the world would look like through the glasses that you’ve selected, including lightly tinted glasses and all.

We have to prepare for the Machine Intelligence revolution, and the best way to do this is to adopt a bi-modal capability, using ‘Agile’ for rapid innovation. We set aside time for team members to self-organize and innovate. For example, we have a quarterly hackathon that has been hugely successful. To accelerate even further, I’ve concentrated and funded skilled leaders. We created an innovation team with a specific charge to identify and drive Machine Intelligence initiatives. All with the aim of getting smarter faster and staying ahead of the competition.

When are you @home – Marcel Krom

CIO – PostNL

The Dutch postal law says that we are not allowed to open envelopes. That is not helping us. Competition for us is not other mail companies, but digital: Google reads your messages and shows you advertisements. Likewise, it would be great if the mailman could open your message and read it to you. Privacy is a weapon that can be used in competition. We need to have the discussion about privacy regulation and it needs to be a discussion about privacy in the western world. It will only work if regulation is globally coordinated.

We are an old company, 200 years old. Much of the information we’ve gathered is hidden, and cannot be used. As a CIO I want to change that, get a grasp of the information we have in our company and use it. Post is declining 10% per year. On the other side, parcels increase 7 to 8% every year. To optimize, it would already be great if we knew when people are at home. We could know this, based on previous delivery efforts, and we could deliver when you’re at home. The biggest cost for us is when we try to deliver a package or registered message while you’re not at home.
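
As an illustration of how previous delivery efforts could indicate when someone is at home, here is a minimal sketch in Python. It is not PostNL’s system: the three time slots, the function name and the smoothing rule are assumptions made for the example.

```python
def best_delivery_slot(attempts, slots=("morning", "afternoon", "evening")):
    """Pick the time slot with the best estimated chance of finding someone home.

    attempts: list of (slot, delivered) pairs from earlier delivery efforts
    at one address, where delivered is True if someone was at home.
    Every slot in `attempts` is assumed to appear in `slots`.
    """
    stats = {slot: [0, 0] for slot in slots}  # slot -> [successes, attempts]
    for slot, delivered in attempts:
        stats[slot][0] += int(delivered)
        stats[slot][1] += 1

    def at_home_rate(slot):
        successes, total = stats[slot]
        # Laplace smoothing: a slot without history is scored as 50/50
        # instead of being treated as certain success or failure.
        return (successes + 1) / (total + 2)

    return max(slots, key=at_home_rate)


# Hypothetical delivery history for one address.
history = [
    ("morning", False),
    ("morning", False),
    ("afternoon", False),
    ("evening", True),
    ("evening", True),
]
print(best_delivery_slot(history))
```

With this history the evening scores highest, so the next parcel would be planned for the evening. A real planner would also have to weigh route cost against the estimated chance of a failed delivery.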

We are restructuring, refocusing to address the changes we’re facing. We have an all-cloud strategy (now 80% there), we need to go faster every day. We do analytics to optimize routing, we experiment with tracking people (if only the unions would allow it), we break barriers, put sensors in, create platforms to enable senders and receivers to connect, become event driven, … anything to be agile and relevant.

In logistics, up to 48% of jobs will disappear through robotization and new software for call centers (robotic answers), enabled by data, internet of things, machine learning, mobile robotics, autonomous cars, drones, P2P business models, sharing economy, mobility, social networks, cloud computing, process redesign, standardization, track & trace, planning algorithms and all other algorithms. We are looking closely at P2P mail startups. They will challenge us. Are we ready for this? We need to be. We need to be intelligent like Leonardo da Vinci, empathic like Mandela, fit like Messi and passionate like Martin Luther King.

Day 2 – Part Four: Life in the Age of Intelligent Decision Machines

What will society look like when intelligent decision machines are all around us? How do we redesign the economy?

Tradenet: A possible Blockchain future - Mike Hearn

Bitcoin Developer and Futurist

What would happen if you give autonomous robots access to digital money? Before we dive into a future scenario, note that nothing in this talk assumes any new scientific breakthroughs: The technology for everything I talk about could be built today (or perhaps next year) by a sufficiently motivated programmer.

Let’s imagine Annie, living about 50 years into the future. She gets up in the morning, gets ready to go out and sees a self-driving car outside. The interesting thing is that she didn’t order it. Her smartphone and computers have learned her daily schedule and started to predict. Cheap or free labor makes new things possible. In this case: it enables ‘speculative customer acquisition’. It’s a gamble on the side of the taxi.

Annie lives in a skyscraper. Outside her window is a flat ledge that she can reach, on which drones can drop parcels. Computers in her home speculatively order things that get delivered. She can accept these things and take them in, or otherwise they simply get picked up again.

In Annie’s world, technology went through a troubled history: who owns the technology? How are prices set? Technology is easily monopolized: Robot taxis? Big brother is watching you! What happens if Google somehow stops service in a country (a trade ban perhaps?): do the automatic cars stop driving? What if there would be a ‘non-drive list’, similar to the no-fly list of today? Or no-drive zones, where an authority could steer people away from places?

A solution to these questions was found that perhaps seems counterintuitive, even weird: Capitalism 2.0. In the case of the taxi: Annie’s taxi has no owner. It finds and trades with humans as equals on the ‘TradeNet’. It can hire humans for repairs or upgrades, and resist hacking attempts. To be truly autonomous, the taxi has to find a way to store money in an inaccessible way. And best of all: it sets its own prices.

In our present time, opening a bank account for a robot is pretty hard. Anti-money-laundering laws require accounts to be owned by humans. But what about bitcoins and the Blockchain?

‘Tradenet’ (for now fictitious) is an infrastructure where you can publish requests, buy in reverse and collect offers. On it, man and machine can trade as equals, with perhaps some kind of quality control, standardized digital contracts and repetitive business protocols (for taxis, shipping, ports, 3D printing, drone delivery etc.). There were some issues in the beginning: robots were at first easily abused and they only got legal rights in 2048. But now, Annie loves agents! You can trust them completely, since reading their code is like reading their mind. In 2065 everyone can read and write code as this is taught in schools.
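
Since Tradenet is fictitious, any code can only be a thought experiment. The sketch below, in Python, shows one way the ‘publish a request, collect offers’ step could look. The `Offer` fields, the agent names and the selection rule (cheapest eligible offer, ties broken by rating) are all invented for illustration and do not come from the talk.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Offer:
    agent: str     # who is offering: a robot taxi or a human, traded with as equals
    price: float   # asking price for the requested service
    rating: float  # hypothetical quality-control score


def select_offer(offers, max_price, min_rating=0.0):
    """Reverse buying: the requester states its limits, sellers make offers,
    and the cheapest offer that meets the limits wins."""
    eligible = [
        offer for offer in offers
        if offer.price <= max_price and offer.rating >= min_rating
    ]
    if not eligible:
        return None
    # Lowest price first; among equal prices, prefer the higher rating.
    return min(eligible, key=lambda offer: (offer.price, -offer.rating))


# Hypothetical offers collected for one published ride request.
offers = [
    Offer("taxi-a", 12.0, 4.8),
    Offer("taxi-b", 9.5, 3.0),
    Offer("human-driver", 10.0, 4.9),
]
print(select_offer(offers, max_price=11.0, min_rating=4.0))
```

Here the cheapest taxi is rejected on quality and the human driver wins the ride, which is the kind of equal footing between people and machines that the scenario describes.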

Other things happened. For example, in 2030 SendMate became the first open source company. It guarantees privacy when sending messages. It employs humans, but has no CEO: its management is entirely automated. Only the call center, for example, is staffed by independent contractors. Or what about a virtual experience cinema, run by algorithms? Annie can even improve how the cinema operates by adjusting the code and selling it back. The cinema would then test-run it, and, when it works better than what they had, use it. Perhaps giving Annie some bitcoins for her efforts…

Post Capitalism – Paul Mason

Journalist, Economist and Author

Why have the utopian experiments of the past centuries failed? Because there was scarcity. Yet now we’re entering an age of abundance, where the micropayments suggested by Mike Hearn make things very different. But even they may become obsolete. We are in a transition: we need to recognize this transition and, also in the light of social justice, we need to promote it.

Information goods are different. They are different because of the simple act of CTRL-C, CTRL-V. Copy and Paste. You can reproduce at almost no cost. Once you have an award-winning hip-hop record, reproduction and distribution are practically free. There is zero marginal cost. And this is something new: all the books about economics are about scarcity. There is nothing in the economic world that is not scarce.

You could call it the zero marginal cost society. Well, unless of course monopolies, patents and defensible IP try to prevent ‘copy-paste’. Forming a monopoly has been the corporate response to digital. In each of the sectors in the tech world there is a ‘big one’ and they only compete where they touch, on the outer edge. There is one Uber, one Google, one AirBnB, …

IT has a significant effect on the structure of capitalism. Sector by sector, it’s dissolving the price mechanism. It dissolves to the point where you don’t even need micropayments anymore. And the result: a systematic under-utilization of information. In a society where there is ‘free’, the way we think about wages, rewards and prices needs to be reinvented.

Wages and ‘hours of work’ become decoupled. Our parents made money because work was linear and hierarchical. Now we live in a non-sequential, non-hierarchical, networked society. It becomes pointless to time work. This happens in many places: for example, staff cleaning office buildings report their hours by text message, and nobody really checks what they did. Why? Because their job doesn’t really matter, they are bullshit jobs. We’ve created jobs at the lower end of the spectrum that don’t really need to exist. Glass buildings need no painting or fixing. A now famous Oxford study stated that 47% of all jobs could be automated. What do we do with the people? We have to remove the correlation between time and money.

Remember that information is also physical. There is no information that does not exist in reality as well. This digital ‘layer’ of reality, of the economy, keeps expanding. You can look at art galleries with an overlay of ‘expert explanations’, or at airplanes that pump out massive information streams. IoT is often cited, because it allows us to fully utilize capacity in a way we weren’t capable of before. Everything that needs information to be done, to be produced, can be done much cheaper. Or free.

So what is a possible transition path? We can expand collaboration and promote the ‘zero cost’ society. We can reduce unnecessary labor and promote work/wage decoupling. Our aim in the information revolution should be to reduce the amount of work that needs to be done, getting closer and closer to zero. But what to do if there isn’t enough work for people to do? This is where ‘basic income’ comes up as a concept: give people a steady income regardless of how much they work.

The takeaway of all this: capitalism is a complex, adaptive system, and information technology suppresses the adaptation reflex. The idea is that we automate low-skill, low-value work and replace it with high-value work. But information removes value and makes certain things free, and it’s hard to envision the creation of these oft-mentioned high-value jobs. Therefore it would be better to stop thinking about that: it’s an illusion. For example, you can expect companies like AirBnB or Uber to be replaced by local alternatives that do not extract fees but are truly free. We need to prepare for that now.

Intelligence from Machines – Luciano Floridi

Professor of Philosophy and Ethics of Information - University of Oxford

Let’s be realistic. Where are we with AI? AI is an interface. It’s something between one state and another. Between long and short grass, between dirty dishes and clean dishes, between English and German. That’s why people are worried about their jobs: if you are the one doing this conversion, it may no longer be needed. And we look at Science Fiction and imagine what is coming. But is it really coming? Are we worrying for nothing? Proponents use several arguments to convince us that strong AI is coming. Let’s run through the type of arguments and see what we’ve got:

IF THEN – IF AI is coming, THEN…. But for now, there is no strong AI, so when the premise is not there, there is no ‘THEN’. If the four horsemen of the apocalypse are here, the end is near… except there are no horsemen.

COULD - This is really something for the first week of an undergraduate in philosophy. There are two kinds of ‘could’: ‘I could be sick with the flu in a couple of weeks’ or ‘I could win the lottery’. This second could, while it’s possible that a former prince from Nigeria is really sending you a million dollars, is so unlikely it’s not worth thinking about. Strong AI today is ‘possible’ like the lottery ticket, not like the flu.

EXPONENTIAL - Compare it to climbing up a tree as a first phase of going to the moon. Most growth follows a logistic curve: it starts slowly, it goes up and it reaches a plateau. The same thing with AI: my Roomba cleans the floor better and better, but it still cannot ask me if I would like a coffee.

SOONER OR LATER – Do you ever notice? As soon as people start bombarding you with data, they are trying to sell you something. There are so many different types of intelligence, but we still cannot compare human intelligence to machine intelligence. ‘Sooner or later’ is fiction.

SAFE > SORRY – It’s a good argument, but if you believe it, you should also wear a metal helmet to prevent Martians from reading your mind.

WRONG BEFORE – This is a strong one: it cuts through any check. Even the most unlikely scenario can be argued with this: just remember, we have been wrong before! It’s a fallacy.

EXPERT SURVEY - What about asking the experts? Well, there is a problem there: are AI experts perhaps in their profession because they believe in the possibility? They are paid for saying it. It’s their job! Scientifically speaking, it’s disturbing to rely on people with such a strong bias.

NOT YET – We’re really grasping at straws now. The scientific answer is clear: Terminator, singularity stuff is pure sci-fi. Nobody is even close to passing the Turing test.

The real challenges around AI are not if we’ll have strong AI or not, but:

Replaceable agency: if we are the interface between the GPS and the car, between long grass and short grass, between one state and the other, and that interface can be automated, we will be replaced. This is a much more likely scenario than complete AI.

Predictable freedom. Humans have freedom, but we seem to always do the same: we always buy the same toothpaste. What good is freedom if you don’t use it?

Influentiable autonomy. Even worse, we may be easy to influence. Perhaps we can use the machines to influence people. We believe we are autonomous but meanwhile…

Dependent delegation. This is a big risk. As we increasingly rely on technology, we have to know how reliable this infrastructure really is. What infosphere are we building?

The antidote to all this? Three simple principles to guide you:

Think deeper, Design better, Be mindful.

- Michiel Boreel, Group Chief Technology Officer of Sogeti

+31 347 22 10 02

After the event

Available for download:

- Summit 2014 report

- Summit 2014 iBook (open on an iOS device only)

- VINT Design to Disrupt, an Executive Introduction report
