The Synthetic Future: Virtual Influencers of Today Give Us an Insight Into Tomorrow

The media literacy of this generation, combined with political and technological solutions, will have to guide us into the synthetic future...

In his latest blog, SogetiLabs researcher Sander Duivestein, co-author of 'The Synthetic Generation' report from our Digital Happiness Series, explores the concepts of authenticity, originality, and reality: devices that virtual influencers regularly play with to advance their fictional storylines.

Authenticity, a word that so often appears in studies of this generation, is under pressure. Authentic means of undisputed origin: genuine, not a copy. Following real people and observing the world through the eyes of so-called influencers has everything to do with the desire for authenticity. At the same time, the world being looked at is almost never real.

Photos, videos and comments are readily manipulated for someone’s gain, and this generation grew up with that kind of media manipulation. In this context, we look ahead at the possibilities the media offer to make even more use of our own power. Our interest was first aroused by the rise of so-called CGI (computer-generated imagery) influencers.

Behind them, an industry of “digitized people” is emerging, supplying models or avatars for lifelike chatbots: with these virtual people, artificial intelligence gets a face. What is real and what is fake will become increasingly difficult to unravel. An alarming conclusion, perhaps. But we maintain that this generation is better equipped to handle this illusion. After all, they grew up with it.

Easy to Trick

If we look ahead through the lens of technology, we can see that finding out the truth is becoming increasingly difficult. What is real and what is fake? The truth is easily manipulated. A photo of Justin Bieber eating a burrito on a park bench recently went viral. It turned out to be a lookalike (Brad Sousa): the whole thing had been staged to warn people about fake news. Footage of CNN reporter Jim Acosta, whose White House press pass was revoked, also went viral. That video had been only slightly manipulated, sped up at one point and then slightly slowed down, which made the reporter appear to act more aggressively toward a White House intern.

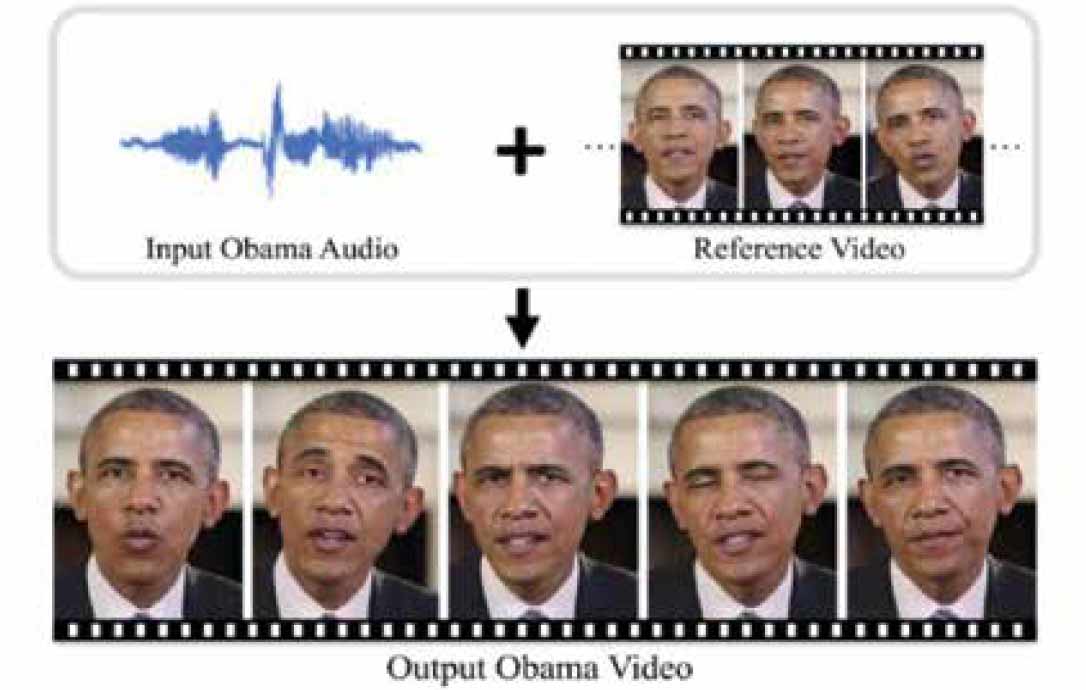

The real-and-fake game does not necessarily require advanced technology to trick us. But the possibilities for manipulating voices, videos and faces so thoroughly that fake becomes indistinguishable from real are growing. Is the sender of a message still who they claim to be? Is it a human being or a computer? Is the voice original or imitated, and does it sound lifelike? The “Synthesizing Obama” research from the University of Washington shows how real and fake are becoming increasingly difficult to tell apart. Original video and audio recordings serve as the basis for a computer-generated, synthetic version of Obama that can then have any words put in his mouth.

From a technological point of view, imitations of real people are becoming increasingly lifelike. Cloning your voice with online tools such as Lyrebird, Voicepods or Deep Voice keeps getting easier. Live images can now be projected into virtual spaces in real time. Soon, vloggers will no longer need to leave their homes. Ami Yamato is a striking example of how easily real and fake are confused; fittingly, her video channel is called “videos that confuse people”. You can clearly see that the vlogger is an animation, but she walks through the streets of London, which are real.

The new standard for lifelike has been set by Magic Leap, which introduced its virtual assistant Mica to the world in October 2018: a human avatar who can be seen through augmented-reality glasses. Anyone who put on the glasses entered a space where Mica looked them questioningly in the eye and suggested music to suit their mood.

In a secluded room, people were given the chance to sit one-on-one with Mica at a table. Although Mica did not speak, she could make clear what she wanted. There was a picture frame on the table, and with a few hand gestures she indicated that it should be hung on the wall. As soon as the empty frame hung from a nail, Mica touched it with a finger and the famous painting by the Belgian artist René Magritte, The Treachery of Images, with its inscription “Ceci n’est pas une pipe”, appeared, illustrating that nothing is what it seems. Giggling, Mica then took the pipe out of the painting, stuck it in her mouth and disappeared behind a wall.

The Real-Fake Quadrant

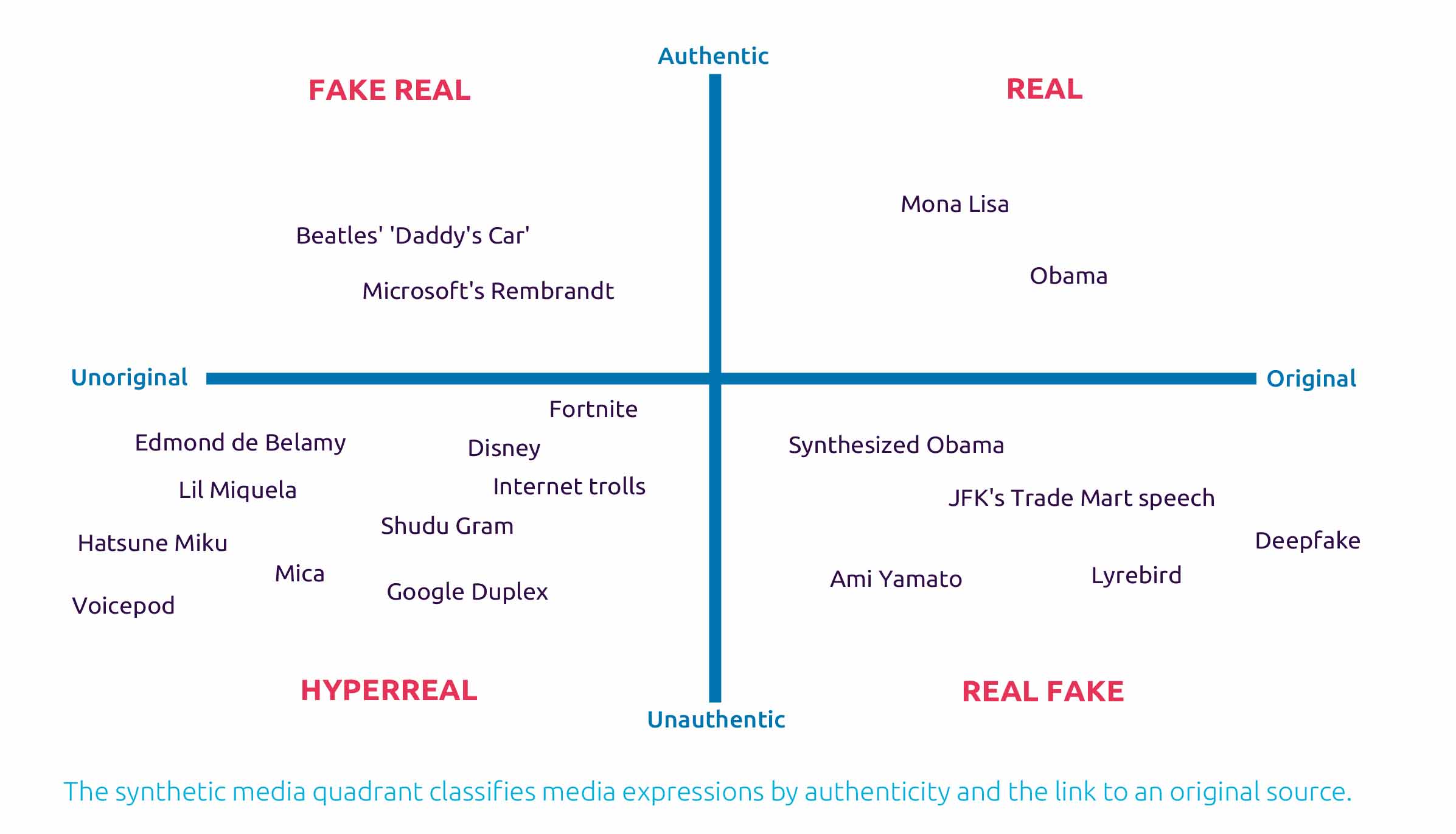

In his article “Artificial Intelligence, Deepfakes and a Future of Ectypes”, information philosopher Luciano Floridi asks how far technology can still stretch the boundaries of reality. He introduces the word “ectype”, a term of Greek origin for a copy that retains a special relationship with the original but is not “the real thing”. CGI influencers are an example of ectypes: the original is human, but they are not “the real thing”. They are hyper-real, inspired by humans, yet they can never be traced back to one specific person.

Paintings by famous master forgers can also be authentic, as long as you know who the forger is, and some of them are even worth a lot of money. Something similar applies to the Rembrandt that Microsoft’s artificial intelligence produced: a painting he never painted, based on his style. It is an authentic painting, but an “unoriginal” one, because the original does not exist.

The same goes for the song “Daddy’s Car”, which sounds one hundred percent like the Beatles but was not written by them. It was composed with the help of an AI that had learned from Beatles songs and then generated a new song in their style. Even so, traces of the original source can be heard in it.

In the “real” quadrant, finding out the truth is a piece of cake: we stand face to face with the original and recognize it. The “real fake” sows the most confusion. It is based on one specific human being, but media manipulation can put any words it likes in the fake’s mouth.

Google Duplex, Google’s synthetic voice assistant that can book a hairdresser’s appointment for you, was far from authentic when it passed itself off as a real person in a conversation with the salon. After heavy criticism in newspapers, magazines and on social media, Google intervened and ensured transparency: in the new demo, Duplex opens the conversation by telling the listener that they are talking to a chatbot. When Duplex makes clear that it is a program, it is authentic, a “real fake” example; when it presents itself as a real human, it belongs to the “hyper-real”.

In an article for New York Magazine, author Max Read argues that a great deal of what happens on the internet is fake: from statistics to people, from companies to politics. As far as he is concerned, the line between fake and real has already been crossed. He calls this “The Inversion.”

The Rise of Hyper-Real Influencers

Flesh-and-blood influencers now have a competitor: the computer-generated variant. The best-known fake influencer is Lil Miquela Sousa, a 19-year-old Brazilian-American model. She is not real but a lifelike simulation that is hard to tell apart from a real person. She has a freckled face, full lips and two little buns on her head.

Miquela is a computer animation developed by Brud, a company that specializes in robotics and artificial intelligence and their application in digital media. Together with her fellow virtual influencers Bermuda and Blawko, and her competitors Noonoouri and the crowdsourced pop star Hatsune Miku, she blurs the line between reality and social media, where reality is hard enough to find as it is. Lil Miquela is “an exaggerated version of the beauty standard” typical of Instagram.

Miquela wears expensive designer clothing, gets backstage passes to exclusive events, has appeared on the covers of two renowned magazines, temporarily took over Prada’s account to promote the brand’s new collection, and released a widely streamed single, “Not Mine”, on Spotify. She also stands up for transgender rights and supports the LGBT+ community and the Black Lives Matter movement. In short, she leads the perfect life that teenage girls worldwide dream of and represents all the values this new generation holds dear. Marketers love her too, because CGI influencers are easier to control than real people: the risk of brand damage is much greater when a real influencer gets into trouble.

According to many, authenticity is a prerequisite for success as an influencer. Does the poster come across as authentic? Is the match with the brand logical? How “real” or “fake” is the other person? According to philosopher Joep Dohmen, the concept of “authenticity” has become very complex in our time. People feel it is their task to be true to themselves, yet they have no clear criterion for measuring this, or for what being oneself actually means. Meanwhile, they are judged on their authenticity on social media.

Top photographer Cameron-James Wilson went one step further with his creation Shudu Gram. His digital character is the world’s first digital supermodel. With its striking appearance, Shudu Gram now has more than one hundred thousand followers. “We live in such a filtered world that everything that is real becomes fake,” says Wilson about his creation. “Our society is just incredibly fake and I want to mix up this “real” and “fake”.” Lil Miquela has something to say about this herself: “I think we are at a time when it is becoming increasingly difficult to find authenticity.

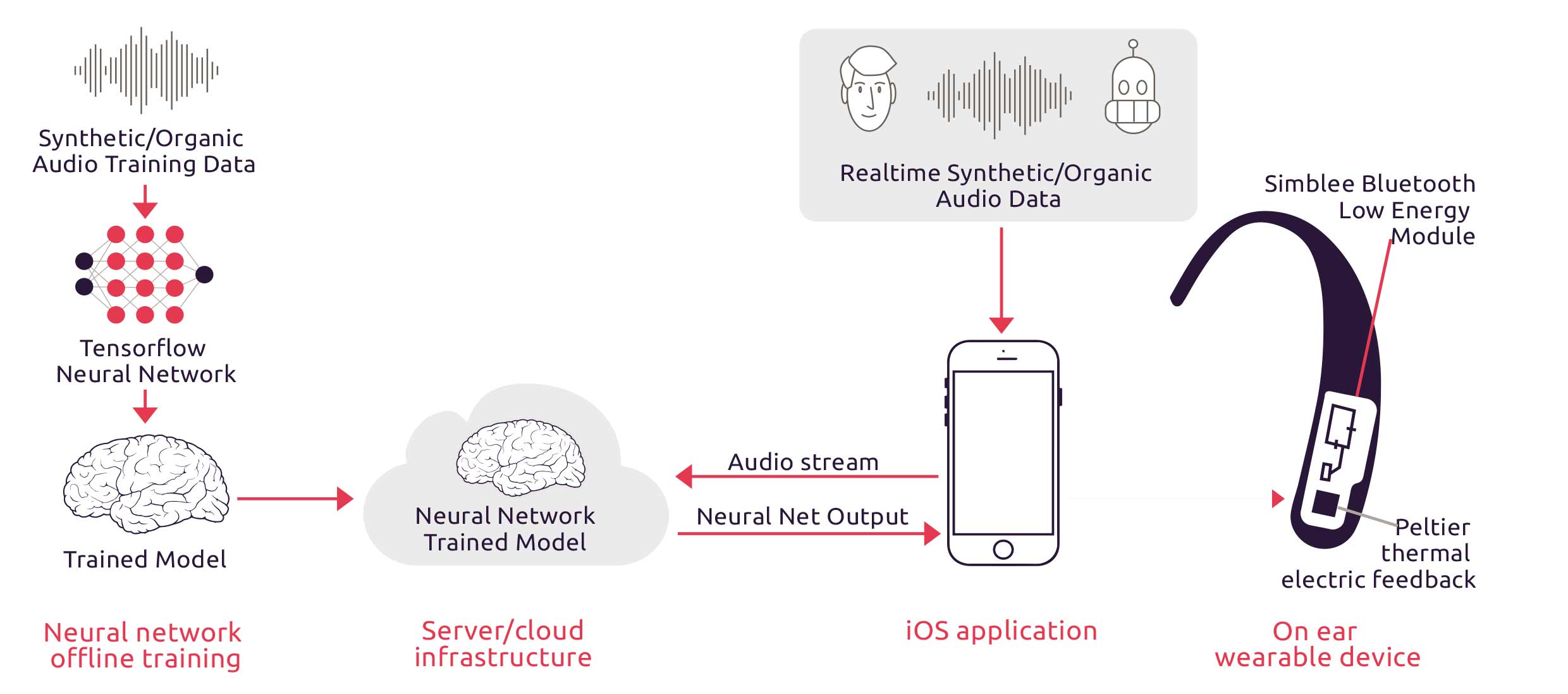

People can interpret and project any desired meaning onto the images they see on Instagram. Regardless of those different interpretations, I have created real friendships and relationships with people online. ”As with any problem created by technology, a technological solution is emerging. The Anti Ai project offers artificial intelligence as a solution to quickly detect fake information. A neural network trained on tensor flow recognizes synthetic media, such as Obama’s voice from our example. As an interface to warn us we can get thermal feedback – literally cold chills – if we are fooled.

Armed Against the InfoCalypse

What happens in the end, when we no longer know whether what we see or hear is authentic, when we can no longer distinguish between real and fake? Aviv Ovadya, Chief Technologist at the Center for Social Media Responsibility, warns of an approaching InfoCalypse. We spoke to him in Chicago, where he told us that if we do not take this problem seriously, we will sink into “reality apathy”, because we constantly have to ask ourselves whether something is true or false, manipulated or authentic. At the same time, we are dealing with a synthetic generation that grew up with this ambivalence between real and fake. They are experienced experts with a well-tuned bullshit detector.

Running a quick reverse image search on Google for a photo of someone before going on a date is quite common. Is this person real? Just a fact check. Will the synthetic generation find the answers to these problems? It looks hopeful, but more help will probably be needed. Both Luciano Floridi and Aviv Ovadya see new legislation and technology itself as solutions. For example, the artificial intelligence of Anti AI AI can warn us whether we are dealing with a human being or a robot, and in our previous report, In Code We Trust, we gave examples of blockchain applications aimed at eliminating fake news.

The European Union has now come up with an action plan against disinformation, focusing in particular on Russian fake news and the European elections. In 2019, the EU’s diplomatic service, the European External Action Service (EEAS), was allocated about 50 new staff and more than €3 million in additional funding to carry out this work. Alongside this monitoring role, the information industry must comply with codes of conduct, which should restrict producers of fake news. Tech platforms must also explain much more about how they operate and give fact-checkers access to their platforms.

The advertising industry itself is working on new standards. In the UK, for example, the Advertising Standards Authority (ASA) has published an influencers’ guide to educate influencers about the advertising rules. The aim is to keep influencers’ advertisements authentic and engaging within the boundaries of what is permitted. In short, the media literacy of this generation, combined with political and technological solutions, will have to guide us into the synthetic future.